HP Cluster Platform Introduction v2010 Using InfiniBand for a Scalable Compute

HP Cluster Platform Introduction v2010 Manual


HP Cluster Platform Introduction v2010 manual content summary:

- HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 1

for a scalable compute infrastructure Technology brief, 4th edition Introduction ...2 InfiniBand technology ...3 InfiniBand architecture...3 InfiniBand Quality of Service functions 5 InfiniBand performance ...5 Scale-out clusters built on InfiniBand and HP technology 7 HPC configuration with HP - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 2

organizations are deploying scale-out computing using clusters for these applications: • High performance computing (HPC) • Enterprise applications • Financial services • Scale-out databases Scale-out computing uses interconnect technology to combine a large number of stand-alone server nodes into - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 3

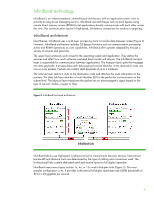

technology InfiniBand is an industry-standard, channel-based architecture with an application-centric view to provide an easy-to-use messaging service. InfiniBand uses techniques such as stack bypass using remote direct memory access (RDMA) to let applications directly communicate with each other - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 4

a bandwidth of 2 Gb/s. The InfiniBand architecture defines a virtual lane-mapping algorithm to ensure interoperability between end nodes that support different numbers of virtual lanes. Figure 4. InfiniBand virtual lane operation (sources and targets). Overhead in data transmission limits the maximum - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 5
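The 2 Gb/s figure above is consistent with encoding overhead on a single lane. The sketch below is only an illustration: the per-lane signaling rates and the 8b/10b encoding are assumptions based on the commonly published SDR, DDR, and QDR link generations, since the snippet is cut off before it gives them, and `data_rate_gbps` is a hypothetical helper name.

```python
# Assumed per-lane signaling rates (Gb/s) for SDR, DDR, and QDR links.
SIGNALING_GBPS = {"SDR": 2.5, "DDR": 5.0, "QDR": 10.0}

# Assumed 8b/10b line encoding: 8 data bits are carried in every 10 line bits.
ENCODING_EFFICIENCY = 8 / 10


def data_rate_gbps(generation: str, lanes: int = 1) -> float:
    """Return the usable data rate in Gb/s after encoding overhead."""
    return SIGNALING_GBPS[generation] * ENCODING_EFFICIENCY * lanes


if __name__ == "__main__":
    # Print the usable rate for each generation at 1x, 4x, and 12x widths.
    for gen in SIGNALING_GBPS:
        for width in (1, 4, 12):
            print(f"{width}x {gen}: {data_rate_gbps(gen, width):.0f} Gb/s")
```

Under these assumptions a 1x SDR link carries 2 Gb/s of data, matching the figure in the text, and a 4x QDR link carries 32 Gb/s.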

a clustered environment competes for fabric resources such as physical links and queues. To minimize interference and congestion, InfiniBand implements Quality of Service (QoS) functions on each virtual lane. Flow control Ethernet uses flow control as a time-based function. To avoid input overload - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 6
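The snippet above breaks off before it describes InfiniBand's own mechanism. InfiniBand link-level flow control is generally described as credit-based and applied per virtual lane, so the toy model below may help illustrate the idea. The class and method names are hypothetical, and the model ignores packet sizes, timing, and how credits are actually advertised on the wire.

```python
from collections import deque


class CreditedLink:
    """Toy model of one virtual lane with credit-based flow control."""

    def __init__(self, receiver_buffers: int) -> None:
        self.credits = receiver_buffers  # credits the sender may still spend
        self.rx_queue = deque()          # packets held in receiver buffers

    def send(self, packet: str) -> bool:
        """Transmit only if a credit is available; otherwise the sender waits."""
        if self.credits == 0:
            return False
        self.credits -= 1
        self.rx_queue.append(packet)
        return True

    def drain(self) -> str:
        """Receiver consumes one packet, freeing a buffer and returning a credit."""
        packet = self.rx_queue.popleft()
        self.credits += 1
        return packet


if __name__ == "__main__":
    link = CreditedLink(receiver_buffers=2)
    print(link.send("p1"), link.send("p2"), link.send("p3"))
    link.drain()
    print(link.send("p3"))
```

In this model the sender never transmits into a full receiver, so packets are held back at the source rather than dropped at the destination.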

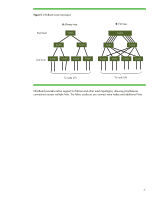

[Figure: switch levels, including a leaf level, with links to node I/Fs] InfiniBand provides native support for fat-tree and other mesh topologies, allowing simultaneous connections across multiple links. The fabric scales as you connect more nodes - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 7

Scale-out clusters built on InfiniBand and HP technology Scale-out cluster computing has become the common architecture for HPC, and broader markets are adopting it. The trend is toward using space- and power-efficient systems for scale-out solutions. Figure 6 shows the key components for building a - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 8

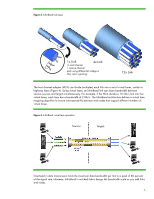

HPC configuration with HP BladeSystem solutions Figure 7 shows a full-bandwidth, fat-tree configuration of HP BladeSystem c-Class components providing 576 nodes in a cluster. Each c7000 enclosure includes an HP 4x QDR InfiniBand Switch Blade, with 16 downlinks for server blade connection and 16 QSFP - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 9
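As a rough check on the 576-node figure, the number of enclosures follows from the 16 server downlinks per switch blade. This is a minimal sketch; `enclosures_needed` is a hypothetical helper, and it assumes one node per downlink and one switch blade per enclosure, as the sentence above suggests.

```python
def enclosures_needed(nodes: int, downlinks_per_switch: int = 16) -> int:
    """Return how many enclosures are needed, assuming one node per downlink."""
    # Ceiling division without floating point.
    return -(-nodes // downlinks_per_switch)


if __name__ == "__main__":
    print(enclosures_needed(576))
```

With 16 downlinks per enclosure, 576 nodes fill 36 enclosures exactly.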

To meet extreme density goals, the half-height HP BL2x220c server blade includes two server nodes. Each node can support two quad-core Intel® Xeon® 5400-series processors and a slot for a mezzanine board. That equals up to 32 nodes (256 cores) per c7000 enclosure. Each - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 10
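The node and core counts above can be reproduced with simple arithmetic. The sketch below takes the per-blade figures from the text. The count of 16 half-height blade bays per c7000 enclosure is an assumption that the snippet does not state, and `enclosure_capacity` is a hypothetical helper name.

```python
# Figures taken from the text: two nodes per BL2x220c blade,
# two quad-core processors per node.
NODES_PER_BLADE = 2
PROCESSORS_PER_NODE = 2
CORES_PER_PROCESSOR = 4

# Assumption (not stated in the snippet): a c7000 enclosure
# has 16 half-height blade bays.
BLADES_PER_ENCLOSURE = 16


def enclosure_capacity(blades: int = BLADES_PER_ENCLOSURE) -> tuple[int, int]:
    """Return (nodes, cores) for an enclosure holding the given number of blades."""
    nodes = blades * NODES_PER_BLADE
    cores = nodes * PROCESSORS_PER_NODE * CORES_PER_PROCESSOR
    return nodes, cores


if __name__ == "__main__":
    nodes, cores = enclosure_capacity()
    print(f"{nodes} nodes, {cores} cores per enclosure")
```

Under that assumption the result is 32 nodes and 256 cores, which agrees with the figures quoted in the text.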

flexibility of a custom solution with the simplicity and value of a factory-built product. The HP UCP is a modular package of hardware, software, and services. You can configure HP Cluster Platforms with HP ProLiant BL, DL, or SL systems. You have a choice of packaging styles, processor types, and - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 11

Ethernet, RDMA is a core capability of InfiniBand architecture. Flow control and congestion avoidance are native to InfiniBand. InfiniBand also includes support for fat-tree and other mesh topologies that allow simultaneous connections across multiple links. This lets the InfiniBand fabric scale as - HP Cluster Platform Introduction v2010 | Using InfiniBand for a Scalable Compute - Page 12

http://www.rdmaconsortium.org. http://h20000.www2.hp.com/bc/docs/support/SupportManual/c00589475/c00589475.pdf http://h18004.www1.hp.com/products/blades/ for HP products and services are set forth in the express warranty statements accompanying such products and services. Nothing herein should be

Using InfiniBand for a scalable compute infrastructure

Technology brief, 4th edition

- Introduction ... 2
- InfiniBand technology ... 3
- InfiniBand architecture ... 3
- InfiniBand Quality of Service functions ... 5
- InfiniBand performance ... 5
- Scale-out clusters built on InfiniBand and HP technology ... 7
- HPC configuration with HP BladeSystem solutions ... 8
- HPC-optimized HP Cluster Platforms ... 10
- Conclusion ... 11
- For more information ... 12
- Call to action ... 12