Lenovo ThinkServer RD330 MegaRAID SAS Software User Guide - Page 40

RAID Configuration, Strategies


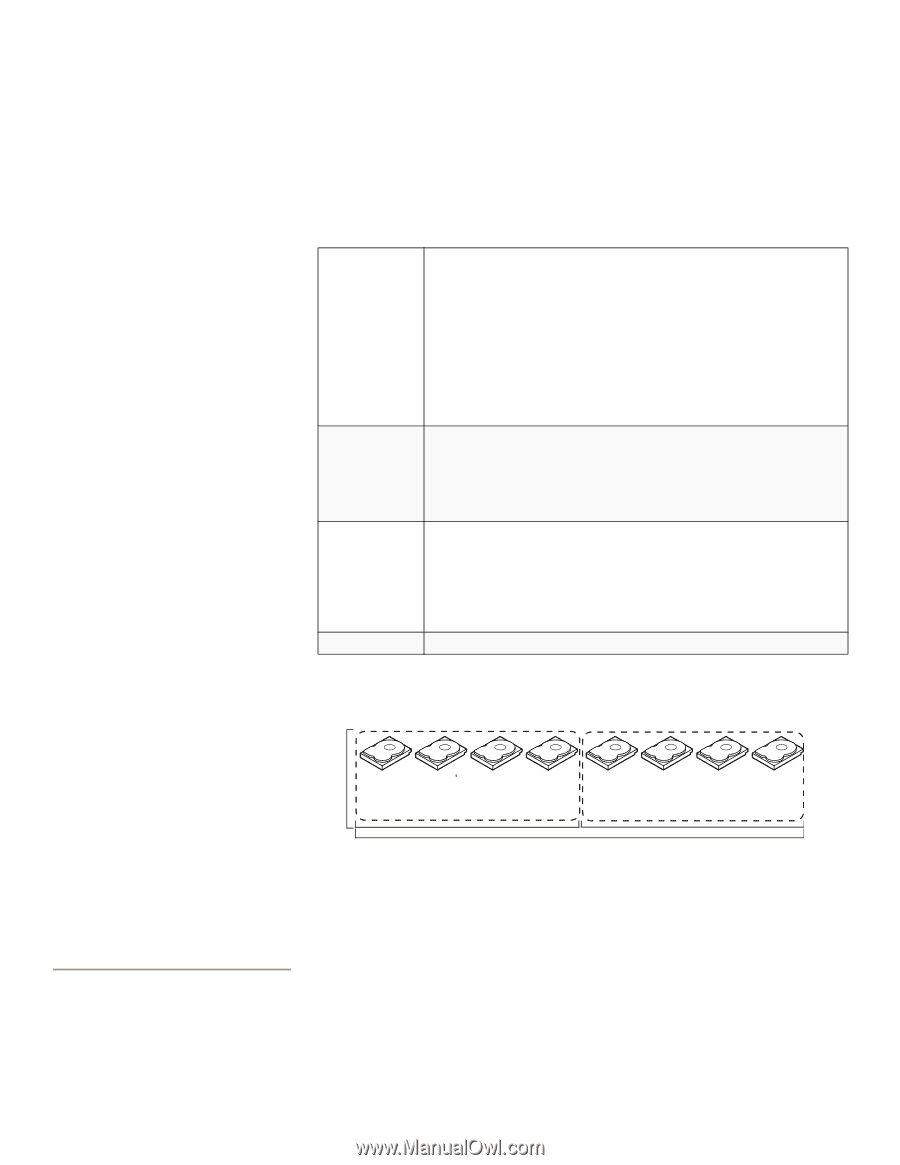

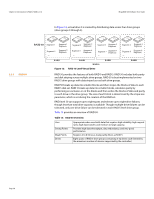

Chapter 2: Introduction to RAID

RAID 60 can support up to 8 spans and tolerate up to 16 drive failures, though less than total drive capacity is available. Two drive failures can be tolerated in each RAID 6 level drive group.

Table 14: RAID 60 Overview

Uses: Provides a high level of data protection through the use of a second parity block in each stripe. Use RAID 60 for data that requires a very high level of protection from loss. If one or two drives fail in a RAID set in a virtual drive, the RAID controller uses the parity blocks to recreate all of the missing information. If two drives in a RAID 6 set in a RAID 60 virtual drive fail, two drive rebuilds are required, one for each drive. These rebuilds can occur at the same time. Use for office automation and online customer service that requires fault tolerance. Use for any application that has high read request rates but low write request rates.

Strong Points: Provides data redundancy, high read rates, and good performance in most environments. Each RAID 6 set can survive the loss of two drives or the loss of a drive while another drive is being rebuilt. Provides the highest level of protection against drive failures of all of the RAID levels. Read performance is similar to that of RAID 50, though random reads in RAID 60 might be slightly faster because data is spread across at least one more disk in each RAID 6 set.

Weak Points: Not well suited to tasks requiring a lot of writes. A RAID 60 virtual drive has to generate two sets of parity data for each write operation, which results in a significant decrease in performance during writes. Drive performance is reduced during a drive rebuild. Environments with few processes do not perform as well because the RAID overhead is not offset by the performance gains in handling simultaneous processes. RAID 6 costs more because of the extra capacity required by using two parity blocks per stripe.

Drives: A minimum of 8.

Figure 14 shows a RAID 60 data layout. The second set of parity drives is denoted by Q. The P drives follow the RAID 5 parity scheme.

[Figure 14: RAID 60 Level Virtual Drive. Two RAID 6 drive groups striped together by RAID 0, holding Segments 1 through 16 with their P and Q parity blocks.]

Note: Parity is distributed across all drives in the drive group.

2.6 RAID Configuration Strategies

The most important factors in RAID drive group configuration are:

- Virtual drive availability (fault tolerance)
- Virtual drive performance
- Virtual drive capacity
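The RAID 60 limits described above (up to 8 spans, two tolerated failures per RAID 6 set, two parity blocks per stripe, a minimum of 8 drives) can be turned into a small availability and capacity calculation. The following Python sketch is illustrative only and is not part of the MegaRAID software. The class name `Raid60Layout`, its fields, and the assumption that a RAID 60 virtual drive has at least 2 spans of equal size are hypothetical choices made for this example.

```python
from dataclasses import dataclass
from typing import Sequence

MAX_SPANS = 8          # from the text: RAID 60 supports up to 8 spans
MIN_SPANS = 2          # assumption for this sketch: at least two RAID 6 sets are striped
PARITY_PER_SPAN = 2    # from the text: two parity blocks (P and Q) per stripe
MIN_TOTAL_DRIVES = 8   # from Table 14: a minimum of 8 drives


@dataclass(frozen=True)
class Raid60Layout:
    """Hypothetical model of a RAID 60 virtual drive with equal-sized spans."""
    spans: int
    drives_per_span: int
    drive_size_gb: float

    def __post_init__(self) -> None:
        if not MIN_SPANS <= self.spans <= MAX_SPANS:
            raise ValueError(f"spans must be between {MIN_SPANS} and {MAX_SPANS}")
        if self.drives_per_span <= PARITY_PER_SPAN:
            raise ValueError("each span needs more drives than parity blocks")
        if self.total_drives < MIN_TOTAL_DRIVES:
            raise ValueError(f"RAID 60 needs at least {MIN_TOTAL_DRIVES} drives")

    @property
    def total_drives(self) -> int:
        return self.spans * self.drives_per_span

    @property
    def usable_capacity_gb(self) -> float:
        # Two drives' worth of space in every span is consumed by parity.
        data_drives = self.spans * (self.drives_per_span - PARITY_PER_SPAN)
        return data_drives * self.drive_size_gb

    @property
    def max_tolerated_failures(self) -> int:
        # Best case: the failures are spread so that no span loses more than two drives.
        return self.spans * PARITY_PER_SPAN

    def survives(self, failures_per_span: Sequence[int]) -> bool:
        """Return True if no RAID 6 set has lost more than two drives."""
        if len(failures_per_span) != self.spans:
            raise ValueError("need one failure count per span")
        return all(0 <= f <= PARITY_PER_SPAN for f in failures_per_span)


if __name__ == "__main__":
    layout = Raid60Layout(spans=2, drives_per_span=4, drive_size_gb=1000)
    print(layout.total_drives, layout.usable_capacity_gb, layout.max_tolerated_failures)
    print(layout.survives([2, 2]), layout.survives([3, 0]))
```

Note that the 16-failure figure is a best case: it holds only when the failures are spread evenly, two per span, across 8 spans. A third failure inside any single RAID 6 set loses the virtual drive regardless of how healthy the other spans are, which is what `survives` checks.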