HP Cluster Platform Interconnects v2010 Using InfiniBand for a Scalable Comput - Page 11

Conclusion


Page 11 highlights

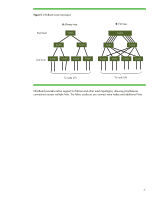

You should base your decision to use Ethernet or InfiniBand on performance and cost requirements. We are committed to supporting both InfiniBand and Ethernet infrastructures, and we want to help you choose the most cost-effective fabric solution for your environment.

InfiniBand is the best choice for HPC clusters requiring scalability from hundreds to thousands of nodes. While you can apply zero-copy (RDMA) protocols to TCP/IP networks such as Ethernet, RDMA is a core capability of the InfiniBand architecture. Flow control and congestion avoidance are also native to InfiniBand. InfiniBand includes support for fat-tree and mesh topologies that allow simultaneous connections across multiple links, which lets the fabric scale as you connect more nodes and links. Parallel computing applications that involve a high degree of message passing between nodes benefit significantly from InfiniBand.

Data centers worldwide have deployed DDR InfiniBand for years and are quickly adopting QDR. HP BladeSystem c-Class clusters and similar rack-mounted clusters support IB DDR and QDR HCAs and switches.
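To make the fat-tree scaling point concrete, the sketch below estimates how many nodes a non-blocking fat tree can connect when it is built from identical switches. It is an illustration under stated assumptions, not a sizing tool from this document: the function name `fat_tree_max_hosts` is hypothetical, the 36-port switch radix is an assumed example value, and the formula applies only to an idealized full-bisection fat tree in which every switch below the top level splits its ports evenly between downlinks and uplinks.

```python
def fat_tree_max_hosts(ports_per_switch: int, levels: int) -> int:
    """Upper bound on hosts in an idealized full-bisection fat tree.

    Assumption: all switches have the same port count, every switch
    below the top level uses half its ports as downlinks and half as
    uplinks, and top-level switches use all ports as downlinks.
    """
    if ports_per_switch < 2 or ports_per_switch % 2 != 0:
        raise ValueError("ports_per_switch must be an even number >= 2")
    if levels < 1:
        raise ValueError("levels must be >= 1")
    half = ports_per_switch // 2
    # One level: a single switch, so all ports connect hosts (2 * half).
    # Each extra level multiplies capacity by the downlink count (half).
    return 2 * half ** levels


if __name__ == "__main__":
    radix = 36  # assumed example switch radix, not taken from this document
    for levels in (1, 2, 3):
        print(f"{levels} level(s): up to {fat_tree_max_hosts(radix, levels)} hosts")
```

With the assumed 36-port radix, two switch levels reach into the hundreds of nodes and three levels reach into the thousands, which matches the range described above. Real deployments often oversubscribe uplinks or reserve ports, so actual node counts differ.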