HP Cluster Platform Interconnects v2010 Using InfiniBand for a Scalable Comput - Page 8

HPC configuration with HP BladeSystem solutions


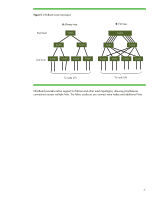

Figure 7 shows a full-bandwidth, fat-tree configuration of HP BladeSystem c-Class components providing 576 nodes in a cluster. Each c7000 enclosure includes an HP 4x QDR InfiniBand Switch Blade, with 16 downlinks for server blade connection and 16 QSFP uplinks for fabric connectivity. Sixteen 36-port QDR InfiniBand switches provide spine-level fabric connectivity.

Figure 7. HP BladeSystem c-Class 576-node cluster configuration using BL280c blades

[Figure: HP c7000 Enclosures #1 through #36, each containing 16 HP BL280c G6 server blades w/4x QDR HCAs and an HP QDR IB Switch Blade, connected to 36-Port QDR IB Switches #1 through #16.]

| Parameter | Value |
| --- | --- |
| Total nodes | 576 (1 per blade) |
| Racks required for servers | Nine 42U (assumes four c7000 enclosures per rack) |
| Interconnect | 1:1 full bandwidth (non-blocking), 3 switch hops maximum, fabric redundancy |
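The sizing figures for this configuration can be cross-checked with a short calculation. The following is a minimal Python sketch, not HP tooling: the `FatTree` class and its field names are hypothetical, and the counts are taken from the description of Figure 7. It assumes a two-level fat tree in which every leaf uplink terminates on a spine switch port.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FatTree:
    """Hypothetical model of the two-level fat tree in Figure 7."""
    enclosures: int = 36           # c7000 enclosures, one leaf switch blade each
    downlinks_per_leaf: int = 16   # server blade connections per switch blade
    uplinks_per_leaf: int = 16     # QSFP uplinks per switch blade
    spine_switches: int = 16       # 36-port QDR InfiniBand switches
    ports_per_spine: int = 36
    enclosures_per_rack: int = 4   # assumption stated in the source table

    @property
    def nodes(self) -> int:
        # One node per blade, one blade per leaf downlink.
        return self.enclosures * self.downlinks_per_leaf

    @property
    def oversubscription(self) -> float:
        # Downlink-to-uplink ratio at the leaf; 1.0 means 1:1 (non-blocking).
        return self.downlinks_per_leaf / self.uplinks_per_leaf

    @property
    def spine_ports_needed(self) -> int:
        # Assumes every leaf uplink lands on exactly one spine port.
        return self.enclosures * self.uplinks_per_leaf

    @property
    def spine_ports_available(self) -> int:
        return self.spine_switches * self.ports_per_spine

    @property
    def racks(self) -> int:
        # Ceiling division: a partially filled rack still counts as a rack.
        return -(-self.enclosures // self.enclosures_per_rack)


cluster = FatTree()
print(cluster.nodes, cluster.racks, cluster.oversubscription)
print(cluster.spine_ports_needed, cluster.spine_ports_available)
```

With the default values, the leaf uplinks exactly fill the spine ports (36 × 16 = 16 × 36 = 576), which is consistent with the 1:1 full-bandwidth claim. The 3-hop maximum corresponds to the leaf, spine, leaf path between blades in different enclosures.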