HP Cluster Platform Interconnects v2010 Using InfiniBand for a Scalable Comput - Page 2

Introduction, Gigabit Ethernet GbE


Page 2 highlights

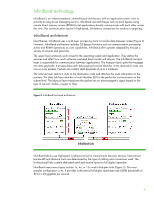

Introduction

Increasingly, IT organizations are deploying scale-out computing using clusters for these applications:

• High performance computing (HPC)
• Enterprise applications
• Financial services
• Scale-out databases

Scale-out computing uses interconnect technology to combine a large number of stand-alone server nodes into an integrated and centrally managed system (Figure 1). A cluster infrastructure works best when built with an interconnect technology that scales easily, reliably, and economically with system expansion.

Figure 1. System architecture for a sample scale-out HPC cluster (diagram labels: External Network, Admin Switches, Console Switches, Service Interconnect, Metadata Server, OST: Object Storage Targets, Computation, Data Management, Visualization)

You can now choose from three interconnect technologies to build a cluster:

• Gigabit Ethernet (GbE)
• 10 GbE
• InfiniBand (IB)

Ethernet can be cost-effective for scale-out systems that run applications with little inter-node communication. 10 GbE meets higher bandwidth requirements than GbE can provide. InfiniBand is ideal for scale-out environments where applications have extensive inter-node communication and require very low latency and high bandwidth across the entire fabric.

This technology brief describes InfiniBand as an interconnect technology used in cluster computing. It describes multiple InfiniBand topologies and multiple configurations for building an HPC cluster. It also explains how InfiniBand achieves high performance in real-world applications.