HP Cluster Platform Interconnects v2010 HP Cluster Platform InfiniBand Interco - Page 98


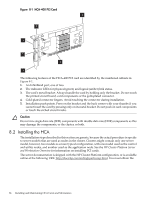

Figure 8-3 Topspin/Mellanox SDR PCI-X HCA

Features of the Topspin/Mellanox PCI-X HCA include:

• Two 4x InfiniBand 10 Gb/s ports (40 Gb/s InfiniBand bandwidth full duplex: 2 ports x 10 Gb/s/port x 2 for full duplex)
• 64-bit PCI-X v2.2, 133 MHz
• 7 Gb/s transfer rates per port
• <6 μs latency
• 128 MB local memory
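The 40 Gb/s figure in the feature list is simple multiplication, and it can be compared with the theoretical peak of the host bus. The sketch below reproduces both numbers. The function names are illustrative only, and the PCI-X peak (bus width x clock) is an idealized value that does not appear in this manual; it ignores protocol overhead and bus sharing.

```python
def infiniband_aggregate_gbps(ports: int, gbps_per_port: float,
                              full_duplex: bool = True) -> float:
    """Aggregate InfiniBand signaling bandwidth across all ports, in Gb/s."""
    directions = 2 if full_duplex else 1
    return ports * gbps_per_port * directions


def parallel_bus_peak_gbps(width_bits: int, clock_mhz: float) -> float:
    """Idealized peak of a parallel bus: one width_bits transfer per clock.

    Assumption: no protocol overhead and a single device on the bus.
    """
    return width_bits * clock_mhz / 1000.0


if __name__ == "__main__":
    # 2 ports x 10 Gb/s/port x 2 for full duplex, as stated in the feature list
    print(infiniband_aggregate_gbps(ports=2, gbps_per_port=10.0))
    # 64-bit PCI-X at 133 MHz (idealized host-bus peak)
    print(parallel_bus_peak_gbps(width_bits=64, clock_mhz=133.0))
```

Under these assumptions the host bus peaks well below the aggregate InfiniBand figure, which is consistent with the lower per-port transfer rate quoted in the list.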

The port LEDs on the Topspin/Mellanox PCI-X HCA blink to indicate traffic on the InfiniBand link during initial setup. The installation and LEDs of the Topspin cards are similar to those of the Voltaire HCAs described previously in this chapter.

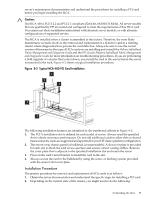

8.4 Topspin/Mellanox PCI-Express HCA

The Topspin/Mellanox PCI-Express HCA supports InfiniBand protocols including IPoIB, SDP, SRP, uDAPL, and MPI. It is a single data rate (SDR) card with two 4x InfiniBand 10 Gb/s ports and 128 MB of local memory. Figure 8-4 shows a Topspin/Mellanox PCI-Express HCA.

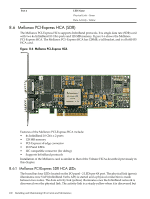

Figure 8-4 Topspin/Mellanox SDR PCI-Express HCA

Features of the Topspin/Mellanox PCI-Express HCA include:

• Two 4x InfiniBand 10 Gb/s ports (up to 40 Gb/s InfiniBand bandwidth full duplex: 2 ports x 10 Gb/s/port x 2 for full duplex)
• x8 v1.0a PCI-Express (8 serial lanes x 2.5 Gb/s/lane x 2 for full duplex)
• 8 Gb/s transfer rates per port, configuration dependent
• <5 μs latency
• 128 MB local memory
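The lane arithmetic in the list above can be worked through in a few lines. This is a minimal sketch, not part of the manual: the helper name is hypothetical, and it assumes the 8b/10b line encoding used by both PCI-Express 1.x and SDR InfiniBand, in which 8 of every 10 transmitted bits carry data.

```python
ENCODING_EFFICIENCY_8B10B = 8 / 10  # assumption: 8b/10b line encoding


def serial_link_gbps(lanes: int, gbps_per_lane: float,
                     full_duplex: bool = False,
                     after_encoding: bool = False) -> float:
    """Bandwidth of a multi-lane serial link, in Gb/s.

    full_duplex counts both directions; after_encoding applies the
    8b/10b efficiency to get the data rate instead of the signaling rate.
    """
    rate = lanes * gbps_per_lane
    if full_duplex:
        rate *= 2
    if after_encoding:
        rate *= ENCODING_EFFICIENCY_8B10B
    return rate


if __name__ == "__main__":
    # x8 PCI-Express v1.0a: 8 lanes x 2.5 Gb/s/lane x 2 for full duplex
    print(serial_link_gbps(8, 2.5, full_duplex=True))
    # The same link, one direction, data rate after 8b/10b encoding
    print(serial_link_gbps(8, 2.5, after_encoding=True))
    # One 4x SDR InfiniBand port, one direction: signaling rate and data rate
    print(serial_link_gbps(4, 2.5))
    print(serial_link_gbps(4, 2.5, after_encoding=True))
```

Under the encoding assumption, the data rate of a single 4x SDR port in one direction matches the per-port transfer rate in the list, and the "configuration dependent" qualifier suggests that real throughput also varies with the host system.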


Installing and Maintaining HCA Cards and Mezzanines